When you’re designing a solution for any problem, be it technical or social, the first step is to define the problem. A clear understanding of the problem leads to good solutions. But to know whether your solution works, you need to test it. As the name suggests, this is what software testing is for.

The first picture that comes to mind at the mention of software testing is that of someone hunting for a bug or a glitch in the software. But a major part of software testing is checking whether the solution fulfils all the requirements. The software has to solve the problems it was designed to solve and work in all the conditions in which it is expected to work.

In short, software testing aims to make sure that the software performs according to the expectations of all the stakeholders involved.

Terms involved in testing

An error is a mistake in code.

Testing is the process of identifying defects.

A defect is any variance between the expected result and the actual result.

A defect accepted by the development team is called a bug.

A build that doesn’t meet the requirements is a failure.
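
To make these terms concrete, here is a minimal sketch in Python. The `average` function and its bug are hypothetical, invented purely for illustration: the hard-coded divisor is the error, and the mismatch between the expected and the actual result is the defect that testing uncovers.

```python
def average(values):
    # Error: the programmer divides by a hard-coded 2 instead of len(values).
    return sum(values) / 2


def find_defect(func, args, expected):
    """Return a description of the defect, or None if the results match."""
    actual = func(args)
    if actual != expected:
        return f"expected {expected}, got {actual}"
    return None


# With two values the error stays hidden; with three it shows up as a defect.
print(find_defect(average, [2, 4], 3))     # None
print(find_defect(average, [3, 3, 3], 3))  # expected 3, got 4.5
```

Note that the error exists in both runs, but only the second input turns it into a visible defect.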

What is the need for software testing?

If you ignore software testing, customer complaints could be the least of your worries. Software issues can result in the loss of money, property, and even life. The history of software development is full of incidents in which software issues have led to disasters.

In April 1999, a Titan IVB rocket with a Centaur upper stage was launching a military communication satellite. During development of the guidance program, the roll damping constant was entered as -0.199 instead of -1.99. A minute error, but it caused the thrusters to carry out unnecessary manoeuvres that depleted the fuel. The result? The failure of a $1.2 billion satellite launch.

A famous, or rather infamous, case of software failure is that of the Therac-25. The Therac-25 was a computer-controlled radiation therapy machine developed by Atomic Energy of Canada Limited. In at least 6 documented incidents, the machine delivered almost 100 times the required dose, resulting in the death of at least 3 patients. Unlike the Titan IVB launch failure, this was not a simple oops that cost a lot. Investigation revealed serious lapses in the software development cycle and a lack of sufficient safety testing. It was revealed that the software and hardware were never tested together until the machine was assembled in a hospital.

There have also been many instances in which product prices in online stores were accidentally reduced to a bare minimum. Incidents like these underline the importance of software testing.

Principles of software testing

While there are many testing techniques, tools, and methodologies, seven common principles guide them all.

Exhaustive software testing is not possible

Picture this: an application has 15 input fields, each of which accepts 5 possible values. The number of combinations you’ll have to test is 5^15 = 30,517,578,125. And this example is a huge oversimplification; even simple software has far more combinations to test. Imagine if each of those input fields accepted any 5-digit number instead of one of 5 given values.

So one of the main principles of software testing is that it is simply impossible to test all the possible combinations. If you tried, the resources needed for software development would be prohibitively huge.
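
The arithmetic above is easy to check with a few lines of Python. This is only a back-of-the-envelope sketch: the speed of one test per millisecond and the reading of a 5-digit field as 00000 to 99999 are assumptions made here for illustration, not figures from a real project.

```python
def combination_count(fields, values_per_field):
    """Number of distinct input combinations for independent fields."""
    return values_per_field ** fields


total = combination_count(fields=15, values_per_field=5)
print(total)  # 30517578125

# Assumption: one automated test per millisecond, running non-stop.
tests_per_second = 1000
seconds_per_year = 60 * 60 * 24 * 365
years = total / (tests_per_second * seconds_per_year)
print(f"{years:.2f} years")  # 0.97 years

# Assumption: a five-digit field accepts 00000-99999, i.e. 100000 values.
five_digit_total = combination_count(fields=15, values_per_field=100_000)
print(len(str(five_digit_total)))  # 76 (the count is a 76-digit number)
```

Even under these generous assumptions, the toy example takes about a year of continuous testing, and the 5-digit variant is far beyond reach.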

Does that mean we simply test less to save money? No. It just means that how much you test depends on risk assessment. A video game crashing? Definitely a problem: it will hurt the ratings and can damage the reputation of the designer. But it’s not going to kill anyone. An amusement park ride malfunctioning or a nuclear centrifuge going haywire? A much bigger concern.

Instead of exhaustive testing, testing based on risk assessment is carried out. Mission-critical or safety-critical parts of the application are given more attention during testing.
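
One simple way to picture risk-based prioritisation is to score each feature and test the riskiest ones first. The sketch below assumes the common convention of risk = likelihood × impact; the feature names and scores are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    likelihood: int  # 1 (rarely fails) to 5 (fails often)
    impact: int      # 1 (cosmetic) to 5 (safety-critical)

    @property
    def risk(self):
        # Assumed scoring rule: risk grows with both likelihood and impact.
        return self.likelihood * self.impact


def prioritise(features):
    """Order features so the highest-risk ones are tested first."""
    return sorted(features, key=lambda f: f.risk, reverse=True)


features = [
    Feature("high-score animation", likelihood=3, impact=1),
    Feature("save game", likelihood=2, impact=3),
    Feature("ride emergency stop", likelihood=2, impact=5),
]

for feature in prioritise(features):
    print(feature.name, feature.risk)
```

With these scores the emergency stop is tested first, even though the animation is the feature most likely to fail.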

Defect clustering

Defect clustering is a principle in software testing that states that defects are not distributed evenly across an application. Most defects tend to cluster around a couple of features or modules of the application. This is similar to the Pareto principle, that 80% of consequences come from 20% of causes.

There are many reasons that could be attributed to this principle or observation. Sometimes you find a problem, and you fix it, which creates more problems. Sometimes there’s that one annoying section of legacy code that everyone’s just scared to touch.

With experience, it becomes easier to spot the problem areas early in development and keep defects from piling up there.
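
If your bug tracker can export the module each defect was reported against, a quick count shows whether clustering is happening. The sketch below uses an invented list of 20 defects across 5 hypothetical modules; real data will look different.

```python
from collections import Counter


def defect_share(defect_modules, top_n):
    """Return the top_n modules by defect count and their share of all defects.

    Assumes defect_modules is a non-empty list with one module name per defect.
    """
    counts = Counter(defect_modules)
    top = counts.most_common(top_n)
    share = sum(count for _, count in top) / len(defect_modules)
    return top, share


# Hypothetical bug-tracker export: one module name per reported defect.
defects = ["billing"] * 9 + ["auth"] * 7 + ["search"] * 2 + ["profile", "help"]

top, share = defect_share(defects, top_n=2)
print(top)             # [('billing', 9), ('auth', 7)]
print(f"{share:.0%}")  # 80%
```

Here two of the five modules account for 80% of the reported defects, which is the kind of skew the principle describes.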

Pesticide paradox

In an agricultural field, if you apply the same pesticide again and again, the pests in the field will gradually gain resistance to it. The same mechanism is behind antibiotic-resistant microbes, and it is why hospitals often appear to be the source of some highly resistant microbes.

You can observe the same thing in software testing too, albeit for different reasons. Apply the same tests to a piece of software over and over, and soon the tests will stop finding any bugs. This is the pesticide paradox.

You run some tests on a version or build of the software, find some bugs, and report them back to the development team. They fix them, you run the tests again, and maybe you find some more bugs this time. After you’ve done this a couple of times, odds are you won’t find any more bugs by running the same test cases.

Of course, the old test cases may still catch old bugs that resurface, but they are likely to miss new ones.

So to prevent this, you’ll have to come up with new test cases every so often.
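
Here is a small sketch of the paradox in Python. The `shipping_cost` rule, its boundary bug, and both sets of test cases are hypothetical, chosen only to show a stale suite that keeps passing while a defect sits just outside the inputs it exercises.

```python
def shipping_cost(weight_kg):
    """Hypothetical rule: 5 up to and including 10 kg, otherwise 9."""
    if weight_kg < 10:  # Bug: the comparison should be <= 10.
        return 5
    return 9


def run_suite(cases):
    """Return the inputs whose actual result differs from the expected one."""
    return [weight for weight, expected in cases
            if shipping_cost(weight) != expected]


# The suite that has been run unchanged on every build.
old_suite = [(1, 5), (5, 5), (25, 9)]
print(run_suite(old_suite))  # []

# Fresh cases that probe around the 10 kg boundary.
new_cases = [(9.99, 5), (10, 5), (10.5, 9)]
print(run_suite(old_suite + new_cases))  # [10]
```

The old suite reports nothing no matter how often it runs; only the new boundary case exposes the bug.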

Testing shows the presence of defects

This principle means that testing can show that defects are present, but it cannot show that they are absent. You can run all sorts of tests on your software, and it is still likely to contain defects. Just because your tests don’t reveal any doesn’t mean there aren’t any. Odds are that you’ve missed something, rather than that the software is defect-free.
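
A classic illustration is a leap-year check. The function below is a deliberately incomplete, hypothetical implementation: every check chosen here passes, yet the defect is still present.

```python
def is_leap_year(year):
    # Incomplete rule: ignores the exceptions for century years.
    return year % 4 == 0


checks = {2024: True, 2023: False, 2000: True}
all_passed = all(is_leap_year(year) == expected
                 for year, expected in checks.items())
print(all_passed)  # True

# An input nobody tested: 1900 was not a leap year.
print(is_leap_year(1900))  # True
```

A green test run only tells you about the inputs you tried.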

Absence of error fallacy

Even if your tests don’t show any defects, it doesn’t mean that your software is usable. Software that is almost completely error-free can still be unusable. This can happen if you test against the wrong requirement or if the requirement is not clear enough. Software that doesn’t solve the problem it was designed to solve is not usable. If it doesn’t address the users’ concerns, or if it doesn’t help achieve the business goals, the software is not usable.

This brings us to the next principle.

Early Testing

Testing should start early in the software development life cycle. How early? As early as possible. Start looking for defects as soon as you receive the requirements.

Rather than treating testing as a separate phase, treat it as an activity that runs throughout development. Catching a bug early can save a lot of time and resources.

Software testing is context dependent

Testing a game is not the same as testing an enterprise application. Testing an IoT application is not the same as testing a calculator app. Depending on the context, your testing methods and strategies will be different.

The different types of testing

The different types of testing can be broadly classified into functional testing, non-functional testing, and maintenance testing.

Functional testing

Functional testing includes unit testing, smoke/sanity testing, integration testing, user acceptance testing, etc. Here the various functions of the application are tested to see whether they meet the user criteria or specification. The outputs for different inputs are verified.
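
As a small example of functional testing at the unit level, the sketch below uses Python’s built-in `unittest` module. The `apply_discount` function is hypothetical and stands in for whatever function your specification describes; each test verifies the output for a given input.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical function under test: reduce price by percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200, 25), 150.0)

    def test_zero_discount_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(80, 0), 80)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80, 120)


# Load and run the test case programmatically.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
print(result.wasSuccessful())  # True
```

In a real project you would normally keep the tests in their own file and run them with `python -m unittest`.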

Non-functional testing

In non-functional testing, the reliability, scalability, and other non-functional aspects of the system are tested. The tests here include performance testing, load testing, usability testing, etc.
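
A very rough performance check can be sketched with the standard library alone. Everything specific here is an assumption for illustration: `build_report` is a stand-in workload and the one-second budget is an invented requirement. Real performance and load testing usually relies on dedicated tools and realistic environments.

```python
import time


def build_report(rows):
    """Hypothetical workload: sum the squares of the first `rows` integers."""
    return sum(i * i for i in range(rows))


def measure(func, *args, repeats=5):
    """Return the best wall-clock time in seconds over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        func(*args)
        best = min(best, time.perf_counter() - start)
    return best


budget_seconds = 1.0  # Assumed requirement, not a real-world figure.
elapsed = measure(build_report, 100_000)
print(f"best of 5: {elapsed:.4f}s, within budget: {elapsed <= budget_seconds}")
```

Taking the best of several runs reduces noise from other processes, but timings still vary from machine to machine.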

Maintenance testing

Maintenance testing is for deployed software. It is necessary when the software is changed or migrated to new hardware. In that case, the changes themselves are tested thoroughly. The changes may also have affected other parts of the software, so those have to be tested again too.

Software development life cycle vs software testing life cycle

Collecting requirements and specifications from the client is the first phase in a typical software development cycle. Then, during the design phase, the languages to be used, the software architecture, etc. are planned, and during the build phase, these are implemented. The testing phase comes only after this, followed by the maintenance phase.

But this doesn’t always work out well, as testing at this late stage may reveal huge issues that take even more resources to resolve.

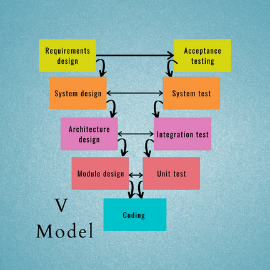

Here’s where the V model of software development comes in. In this model, test design happens alongside software development. The acceptance test is designed during the requirement stage and the system test during the design phase.

During the architecture design phase, the architecture test (often called the integration test) is designed, and during the module design phase, the unit test is designed. Once the software is built, unit testing comes first, followed by architecture testing, then system testing, and finally acceptance testing.
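
The pairing described above can be written down as a simple data structure. This is just a sketch of the V model as the article describes it; the `V_MODEL` list and the helper names are made up for illustration.

```python
# Each development phase is paired with the test level designed during it.
V_MODEL = [
    ("requirements", "acceptance testing"),
    ("design", "system testing"),
    ("architecture design", "architecture testing"),
    ("module design", "unit testing"),
]


def design_order():
    """Test levels in the order they are designed (down the left arm of the V)."""
    return [test for _, test in V_MODEL]


def execution_order():
    """Test levels in the order they are run (up the right arm of the V)."""
    return [test for _, test in reversed(V_MODEL)]


print(design_order())
print(execution_order())
```

The tests are designed top-down but executed bottom-up, which is what gives the model its V shape.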